5 Virtual Training Mistakes – Webinar Tips

Here’s a collection of things I’d rather avoid when delivering virtual training, either observed while attending sessions, or experienced through my own sessions and learned the hard way, turned into 5 webinar tips.

Enabling the webcam

After very interesting discussions with many L&D consultants and instructional designers, I concluded that having your webcam on during the entire webinar is a mistake. Your audience will get the impression that you are not paying attention to them, when in fact you are taking good care of them by keeping an eye on the 5 or 6 things you must constantly monitor during the webinar, even as you are talking. The effect is amplified by the use of multiple screens (see below), as you seem to be looking everywhere but at your audience. So switch the webcam off.

Still, connecting with your audience is important, especially if they don’t know you already. If you need to enable your webcam, do so only during those time intervals you can dedicate exclusively to the camera. Intros, summaries, and answering questions are potential candidates for webcam interaction. Place the cursor on the webcam disable button and don’t move it from there, so you can click on it without even looking. For everything else, disable the camera so you can focus on the right things and your audience feels that focus.

Providing only one input stream

By default I’ll assume that in a 60-minute webinar, every participant will have about 6 to 8 distractions. I’m not talking about potentially justifiable distraction sources such as a phone call – it’s simply that the mind wanders. Perhaps a slightly pessimistic estimate, but in line with some studies on attention times while browsing the web.

I believe you can’t prevent distraction. However, you can create additional input streams or “false distractions”, inputs that may take the participant temporarily away from the main presentation but still keep them “on topic”. How do you do this? Here’s a list of possible “on topic” distractions:

- Give handouts at the start of the session

- Allow chat (more on this later)

- Ask open questions that may require a web search

- Provide pointers to resources that complement the session. You can use URLs, but also things like QR codes that will ensure the participant’s phone is also “on topic”

Using only one screen

The amount of information and action you must oversee while running a virtual session cannot be managed comfortably with just one screen. I have only run one webinar with a single screen, and that was my first. All modern computers, including laptops, can handle a second screen. Some can have a third screen plugged in, and if I had that capability, I would use it too. Typically I have one screen fully dedicated to the virtual delivery software, and the second one holding my session script, my session notes, the chat windows and supporting software such as screen capture. My third screen, if I had one, would keep browsers and apps with all the documents I want to show, already pre-opened and ready to go. Lacking that third screen, these all go minimized until needed on my second screen.

Not allowing chat

I have learned the hard way that chat is a universal right and an integral component of virtual learning. My mistake wasn’t exactly not allowing chat, but restricting it to a few moderators because of other considerations (instructional, technical, topology) that I thought were more important at the time. They were not, and that became clear not during the session but when gathering feedback afterwards.

In hindsight, I wouldn’t have changed the moderator setup, which was designed to allow face to face breakouts and some contingency in case the delivery system failed (Skype was my backup). But I would have allowed open participation in a parallel chatroom. There are a few good reasons for using local moderators; one of them is avoiding virtual breakrooms (see why) in favor of face to face ones.

Thinking it’s over when the webinar is over

Online delivery is a demanding task, and at the end of the session you may feel exhausted. But before calling it a day remember that learning is not time-bound – it’s an experience that doesn’t switch off like WebEx or Lync. You have a small time window at the end of the session where you still have a chance to reclaim your participants’ attention and keep them engaged with the material. Review attendance, then send that email (already drafted of course) out immediately, providing continuity to whatever learning program you are supporting. The 60-90 minute webinar you just completed is most likely a small part in a larger learning program, so ensure participants understand how it fits in the overall picture and what’s next for them. A webinar without follow up is a webinar quickly forgotten. Even if this is a self-contained program, you still need to get feedback while the webinar experience is fresh in their minds.

More Webinar Tips?

This covers some high-level operational aspects of running webinars. In a future post I’ll write about webinar tips for learner interaction. Any tips you would like to share?

Long-term L&D Planning

Tighter budgets and the pressure to “become more agile” are making L&D teams nimbler and pushing them to achieve great efficiency gains. But the process may also lead the team to inadvertently lose sight of the horizon, and that loss can easily cancel any cost benefits gained in this or the next quarter. How to bring balance? Here are five tips for long-term planning in an agile world.

1. Build a SME strategy

Sourcing external subject matter experts is expensive. Although external sourcing gets the job done and sourcing is as easy as cutting a new purchase order, you are probably feeling the pressure to cut costs. Define a strategy for transitioning from external to internal SMEs. You will never be able to source everything internally, and detailing what is likely to remain outside should be part of the plan. The business value of this transition plan may not always hinge on cost efficiency: the time “borrowed” from internal employees may be as costly, or more, than sourcing an external SME. The true value proposition is related to knowledge transfer. By having a reliable internal SME sourcing plan, you are demonstrating:

- Effective knowledge transfer

- That your learning department is actively managing that knowledge transfer

- The organization’s knowledge maturity, evidenced by reduced reliance on external sources

2. Choose tools and technologies for the long run

You just watched a demo of a new system that could host/author all your elearning. It brings innovative features, and although your department will have to make a few adjustments to make full use of those, it all looks great on paper. We should experiment with new technologies and bring the best out of them, but I have seen some of these solutions cause serious overhead to the learning department, and… yes, long-term cost commitments. Consider the following when evaluating new tools and technologies:

- Can it be hosted on your existing infrastructure? If not, what are the running costs?

- Upgrades: Who controls upgrades? What’s the upgrade cadence? What’s the cost? What happens to your existing content in an upgrade?

- Support: How quickly can you get help if things are not working as expected? Will support be an extra cost next year? What happens to support if you decide not to go ahead with that new upgrade?

- Exit strategy: When you decide to move to another solution (yes, it will happen) can you export your existing content and keep using it in another platform? What’s portable, what’s the cost of that portability, and what will be lost?

3. There is no “Delete” button, create one now

Your team has adapted to the demand for faster, leaner production of learning solutions and job aids. You have embraced social corporate learning, and great material just keeps coming; your team has never been so prolific. Employees find what they want, satisfaction scores for L&D are up, reports look great. There is only one problem, and it may not be that obvious right now: there is no “Delete” button. Sooner or later, you are going to face the task of managing obsolete content and a web of cross-references.

Make sure you plan for maintenance before maintenance becomes a problem. For each new learning solution, document expected shelf life, action needed by the end of its shelf life (update, discard, merge, etc.) and dependencies such as prerequisites, learning solutions known to point to this item, and learning solutions referenced within this item. Put that in database format so you can quickly search for an item and understand the implications of retiring or modifying it, and also pull reports about maintenance required one or two years from now. Handy during budget planning time.
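As a rough illustration of what such a record could look like, here is a minimal Python sketch. It keeps the catalog in memory rather than in a real database, and every name in it (`LearningItem`, `referenced_by`, `due_for_maintenance`, the item ids and dates) is a made-up assumption for the example, not part of any particular LMS or tool.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Tuple


@dataclass
class LearningItem:
    """One catalog entry (hypothetical schema, for illustration only)."""
    item_id: str
    title: str
    review_by: date      # end of the expected shelf life
    end_action: str      # what to do then: "update", "discard", "merge", ...
    prerequisites: List[str] = field(default_factory=list)
    references: List[str] = field(default_factory=list)  # items this one points to


def referenced_by(catalog: Dict[str, LearningItem], item_id: str) -> List[str]:
    """Ids of items that list item_id as a prerequisite or a reference."""
    return sorted(
        item.item_id
        for item in catalog.values()
        if item_id in item.prerequisites or item_id in item.references
    )


def due_for_maintenance(
    catalog: Dict[str, LearningItem], cutoff: date
) -> List[Tuple[date, str, str]]:
    """(review_by, item_id, end_action) for items whose shelf life ends by cutoff."""
    return sorted(
        (item.review_by, item.item_id, item.end_action)
        for item in catalog.values()
        if item.review_by <= cutoff
    )


# Sample data, invented for the example.
catalog = {
    item.item_id: item
    for item in [
        LearningItem("JA-001", "Expense tool job aid", date(2026, 3, 31), "update"),
        LearningItem("EL-010", "Expenses elearning", date(2027, 6, 30), "merge",
                     references=["JA-001"]),
        LearningItem("EL-020", "New manager basics", date(2025, 12, 31), "discard",
                     prerequisites=["EL-010"]),
    ]
}

# What is affected if the job aid is retired or changed?
print(referenced_by(catalog, "JA-001"))

# What needs attention before the end of next year's budget cycle?
for review_by, item_id, action in due_for_maintenance(catalog, date(2026, 12, 31)):
    print(review_by.isoformat(), item_id, action)
```

The same fields would map directly onto a spreadsheet or a database table; the point is only that shelf life, end-of-life action and dependencies are recorded per item so that both questions can be answered with a simple query.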

4. Plan designs for the worst possible delivery scenario

You have a group of employees based locally, where face to face delivery is not only practical but also the easiest option. But your learning solutions must also reach out to mobile and geographically dispersed employees. Avoid the easy route of creating face to face designs and then hope that they will be somehow adapted to other scenarios. Start with the worst possible one – it is usually easier to repurpose solutions for the classroom than the other way around. You will also start with the one solution that works everywhere, even if you are out of budget or time to repurpose for classroom in the future.

5. Look outside and save

It is sad to see the “not invented here” syndrome in action. But have a quick look around: we are surrounded by high quality content. Yes, it is external; no, it wasn’t created here, but it is still great content. Of course there are learning solutions that for legal, compliance or competitive reasons will always be created in-house. But seriously, how many “Presentation Skills” courses can be put together, and how much of a competitive advantage can such a course be to any organization? Save budget, resources and accelerate solution delivery by leveraging great content that exists outside. Pay attention to the licensing and use accordingly; there is a vast amount of quality content released under Creative Commons with attribution as the only and very reasonable requirement. Where publication of derivative works is required, consider that possibility as a way to build your team’s reputation outside.

Personas in elearning

Personas are fictional characters that reliably represent a target demographic. Personas are commonly used when designing products and software to bring a tangible, detailed description of the typical user to the makers of those products.

Designers use personas to understand product needs, the most likely uses of a product, and how it should be tested to ensure user satisfaction. Thanks to personas, the user is present in all phases of the product’s design, and that presence helps shape design decisions. It is an indisputable source of information in case of opinion differences among designers; it’s the voice of the customer. It ensures the final product will provide the experience users are looking for.

In learning, the increasing abundance of quality content will shift the attention from content to experience. Because good learning content will be available from many sources, learners will be more selective based not so much on content but on learning experience. This is where personas can help.

But how to create personas for elearning? What are common features of a persona description? Are there examples available? Here are 5 tips and 5 persona examples.

- Personas are based on research. This research cannot be limited to market and demographic segments, because that type of information does not provide enough detail to build a persona. Although market information is useful and can help you find patterns and prioritize your research, you will most likely need to interview at least a few users, and then validate hypotheses from those interviews through quantitative methods such as surveys. Most likely this research work can be reused for more than one solution, so it’s time well invested.

- Personas contain just enough detail to avoid ambiguity. This means that in addition to obvious personal features such as gender and age, you will have to describe personal preferences – for example, in a marketing setting it could be that a persona is “more interested in quality than affordable prices”. If you find yourself compromising on the description of a persona, it is probably a sign that you need to write two and choose which one is more important. If in doubt, refer back to research data.

- Personas don’t contain unnecessary detail. Personal details, preferences and motivations are there for just two purposes: convey meaningful research work that should influence design, and help designers develop user empathy. Anything else will simply distract the team.

- Persona documents are concise. We want to present credible characters to the team. But personas are just a design tool, and as such they have to be usable. A compact persona document of one, or at most two, pages contains enough information for most purposes, and it’s easy to print out and incorporate into charts, sketches and other design work.

- Personas have personal goals. Why do people learn? What is a successful outcome for them? As much as we’d like success to be measured as “completion of elearning course” (which is a task), this may not be exactly what people are looking for (which is the goal). Detailing personal goals will help design and measure the success of learning solutions.

Sample personas

- Students and staff, University College London

http://www.ucl.ac.uk/isd/staff/websites/sample-personas

- Undergraduate students, NCSU Libraries

http://learningspacetoolkit.org/wp-content/uploads/2011/10/Personas-undergrad-061511.pdf

- Website users

http://www.w3.org/WAI/redesign/personas

- US Department of Agriculture

http://www.usability.gov/how-to-and-tools/methods/personas.html

- Accessibility personas

http://www.uiaccess.com/accessucd/personas_eg.html

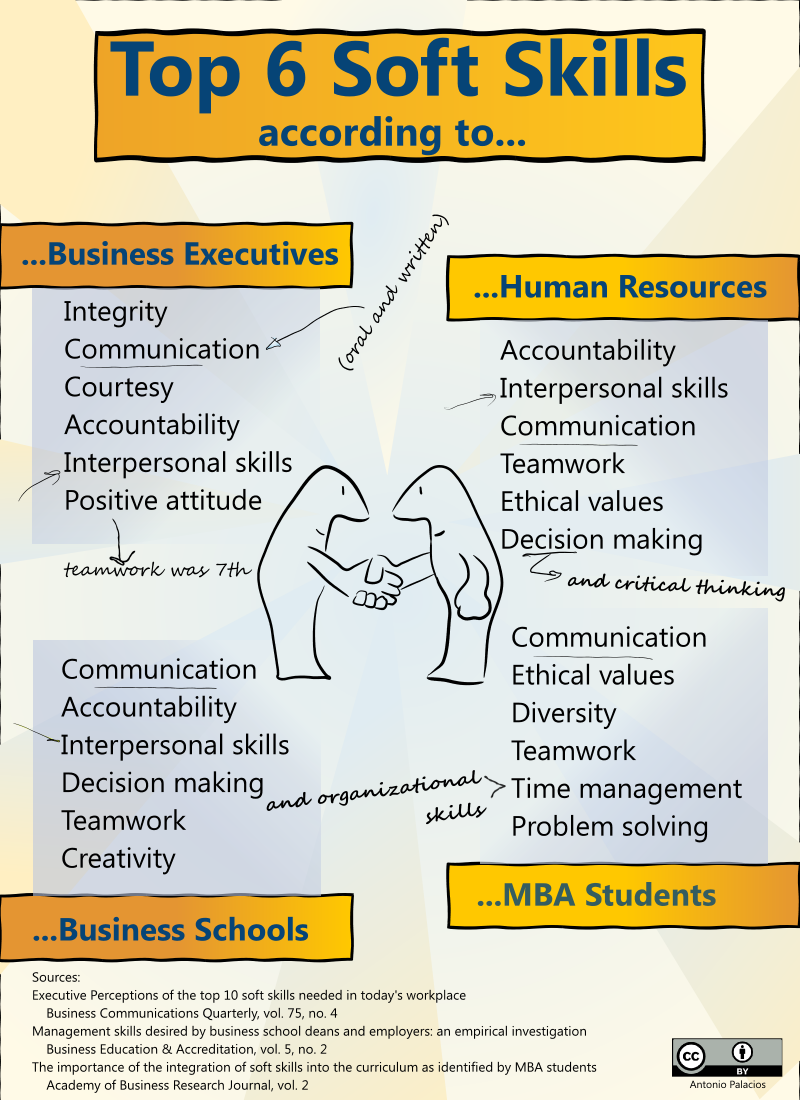

3 Questions that Help Manage Training Costs

It may seem counter-intuitive, but improving training within the organization often involves convincing your business that they don’t need training. Here are three questions that may help avoid unnecessary training. Or, if training is really needed, help ensure that it is effective.

These questions are a great approach in those scenarios where the training request you are receiving from the business is very simple and straightforward. It could be something like:

“We need you to run a course on refactoring. A group of developers has to refactor some existing code we have”

or

“Please put together a course on collaboration for us. The sales team members are not cooperating with each other”

As Hale (2006) points out, training departments are “way down the information chain”, and “generally called upon after others have already decided on a solution”. You may have little room to present a case for revising the conclusion, even though you understand that where business has clarity is on the gap, not on the solution. The gap is what they have observed: “The sales guys are not cooperating”. What we don’t know yet is if the course they are asking for is the best possible solution.

Although a full revision may be entirely out of your hands, it is much easier to ask if the “fundamentals” for providing the requested solution are already there. Here’s when the three questions come in handy:

1. Do people know what is expected of them?

This, of course, goes beyond the specific request, such as “we need you to collaborate more”. It is about a clearly articulated, coherent and convincing message that links goals and vision with specific actions. The answer to question 1 is affirmative if the business routinely communicates (and possibly in some cases over-communicates) expectations related to this specific training request.

2. Do they have the right tools and resources?

Take this in a very broad sense, starting with tangibles such as desk space, light and a comfortable temperature, and extending to less obvious resources such as a usable user interface in applications. I once heard a story (unverified) about a company that organized typing lessons for all mid-managers, following poor performance in completing a required data entry task. Most managers could type fairly well, but were discouraged by a particularly badly written application interface. Training was not the answer.

3. What are the incentives?

What happens if people do what is asked? What happens if they don’t? This is much more subtle than salary and bonus. It can be about pride, about a sense of accomplishment or about the colleagues next door. A production manager once asked me to set up a refactoring course for his programmers. “Refactoring” involves looking at previously written computer code and redesigning it to make it better, although the code will continue doing exactly the same thing. When I asked the third question, it became clear that his programmers thought that refactoring was “uncool”, because it doesn’t produce new functionality and the team next door would be working on new code. Needless to say they were all perfectly qualified to refactor and didn’t need training, but the value perception and therefore implicit incentives were not there. The issue was solved through communication and clarifying recognition, not through training.

If you don’t receive satisfactory answers to every question, try to build a case against training based on cost and the lack of requirements for change to happen. If all questions are covered, then training will have a much better chance of driving actual change.

Hale, J. (2006) ‘The Performance Consultant’s Fieldbook: Tools and Techniques for Improving Organizations and People’, Pfeiffer, 2nd edition.

Measuring Learning Transfer

How do we measure training, and why? Are L&D departments tracking, measuring and reporting meaningful learning data, or just data that makes a solid case for their own survival?

It’s not easy to determine learning effectiveness and learning transfer. But sometimes I wonder if the metrics we usually gather and when we gather them – learner satisfaction right after the end of the course – are good predictors of learning transfer or simply a good Kirkpatrick’s Level 1 study. Yes, satisfied learners will come back for more, but isn’t that a bit of a brute force approach?

Rummler, Brache, Hale and other Human Performance Technologists remind us to take a systemic look, and when I see the data that is usually collected as part of a learning project, I wonder if we should be teaching a bit less and measuring a bit more. More about the system, to be precise.

In a study of corporate learning efficiency published in the American Journal of Distance Education, Gunawardena et al. (2010) suggest that collegial support is the strongest predictor of learning transfer. This is the result of a study conducted in a multinational delivering a highly technical online learning program. Collegial support is part of the system rather than the learning solution alone, although there is much that can be done at the learning solution level to support it.

What do they mean by “collegial support” exactly? These are the questions that encapsulate collegial support:

- My colleagues encourage me to implement what I have learned in this course

- I share what I have learned with my colleagues so that more employees benefit from my learning opportunities

- I have worked together with my colleagues in troubleshooting complications when implementing the newly acquired skills

In this same study, learner satisfaction was driven by factors that were unrelated to learning transfer. Specifically, the main predictor of learner satisfaction was self-efficacy, understood as the ability and confidence to use the online learning solution.

The findings of this study seem to point to a different set of metrics that are not that difficult to capture, although perhaps a bit unpopular in environments where individual performance has taken such a prominent role that collaborative attitudes have lost all incentives.

In another study of corporate online training, Joo et al. (2011) conclude that organizational support has a direct effect on learning transfer. Again, another system-level, not learner-level variable. Here, “organization support” was defined as “supervisor support, peer support and organizational culture”. It is tempting to assume that such variables are constant across the company, but having supported many teams in multiple countries across Europe, I can confidently say this is far from constant, and depends not only on country culture but also division, department, and team culture.

Learner satisfaction is great to have. But are you measuring the right things?

By the way, if you believe that collegial support is the best predictor of learning transfer, what do you think of “social learning” in the corporate space?

Gunawardena, N., Linder-VanBerschot, J., LaPointe, D., Rao, L. (2010) ‘Predictors of Learner Satisfaction and Transfer of Learning in a Corporate Online Education Program’, American Journal of Distance Education, Vol 24, Issue 4, 2010, http://dx.doi.org/10.1080/08923647.2010.522919

Joo, Y., Lim, K., Park, S. (2011) ‘Investigating the Structural Relationships among Organisational Support, Learning Flow, Learners’ Satisfaction and Learning Transfer in Corporate E-Learning’, British Journal of Educational Technology, Vol 42, Issue 6, pp. 973-984, http://onlinelibrary.wiley.com/doi/10.1111/j.1467-8535.2010.01116.x/abstract

10 Tips for Global Training Instructors

Reading “10 Tips for Global Training Instructors” in Trainingmag.com inspired me to write my own 10 tips drawing from my experience in preparing, adapting, helping other instructors and watching them teach, and talking to learners in various countries across Europe. I slightly disagree on a couple of points, but for the most part I am building on what I read at Trainingmag.

1. Do not trust your content

If you are taking your content to a new country, then every slide, every paragraph, every graphic requires a good review. Best case scenario, there are some colloquial terms that will get your audience confused. Worst case, you will offend them. Making a training program global is not a simple task. Far from consisting of a full scan for idioms (“right off the bat?”) that may not register well, it is a task that involves deep knowledge of the target culture, including what may be offensive. Stereotypes that are safe to use locally are your enemy. Graphics depicting hand gestures as harmless as a thumbs up can go wrong. So can the colors you thought had universal meaning. There isn’t a single list that captures everything that must be checked to ensure your content will travel. My advice is to do a full review with a trusted colleague based in the target country or region.

2. Adapt your training kit

Handouts, props, prizes… Your known and trusted training kit may need some adjustments. What is the paper size of your handouts? Letter, Legal, A4? Is that what your audience expects? Will it fit in their folders, along with handouts produced locally? Are the props you carry recognized universally, or do you need to research new ones that carry the meaning intended when first creating the original course? Does your audience understand what a 3×5 index card is? Will the bowl of candy work equally well as a quiz prize?

3. It is possible to overdo welcomes

As well intentioned as it may be, personally greeting every participant and asking them for their name as they enter the class could be a source of embarrassment for some individuals and an inhibitor for interaction when you need it later during the session. Perceptions of how approachable a trainer should be change from country to country.

I have also seen trainers trying too hard to pronounce local names, and turning what should be a brief, nice welcome greeting into pointless language lessons. Frankly, it is OK if you cannot pronounce Michael in Danish, and Michael is already expecting that you will pronounce his name in English and won’t have to explain yet again how it’s said properly. So unless you have enough practice to do relatively well, a humble remark about your lack of local language skills grants you forgiveness for mispronouncing names and lets you focus on the training work at hand.

4. Ground rules must be compatible with local culture

You can set ground rules at the beginning. If they are too far from accepted local custom, prepare yourself to see them fail, despite your best efforts. For example, you may say that you don’t want questions or interruptions until the end of a section. You may set a break of five minutes, and expect everyone back by the end of the break. You may say that participation in the class is expected from everyone. I have seen those rules fail in various places. Do you know which is more likely to need tweaking if you go to Ireland, Israel, Japan?

5. Your intro may need changes

Typically, US trainers start introducing themselves by talking about their past experience and include achievements that may be relevant in order to establish credibility. I have seen these intros delivered in Europe, and after two minutes, they start feeling like shameless self-promotion. Go longer and the intro may start working against you. For the same reason, European instructors may not do the best job when introducing themselves to US audiences. It is worth doing some research, understanding what works well in establishing credibility and adjusting accordingly. In many places, the fact that you are the instructor could be enough.

Bowl of candy. Nice ice-breaker and prize for quizzes in the US. Will it work equally well in France?

6. Acknowledge your international ignorance

It is tempting to look for and put together some rushed examples that apply to international contexts better. If they are not going to have the same polish as the original content, then you may be better off saying that the examples you bring are from a different context and that you would like to discuss how to extrapolate to your audience’s area. Call it an exercise. You will get them motivated to contribute precisely where their local expertise crosses paths with the content you want them to learn. You can then use the output for your next delivery.

7. Humor is tricky

Humor is great and some instructors make very effective use of it, but as we all know, it is also a minefield. When traveling, use with caution. This is not about the usual boundaries we wouldn’t cross back at home – it’s about everyday humor that is regarded as safe where you work. History, culture, assumptions and recent incidents can turn a witty, humorous comment into an offensive or tasteless remark. Frequently, the effect is simply that the audience lacks the context to understand the joke, and the response is a long, cold silence.

8. “Am I talking too fast?”

While it is important to be aware of pace and some trainers are definitely fast talkers and need to watch their speed, my experience is that in most cases, non-native English European audiences will be comfortable with your usual pace. My recommendation is not to distinguish between the overall pace of the course and your personal speech pace. Ask the audience about session pace as usual; in their minds, the question also applies to their ability to understand you and they will respond accordingly. The difference is that in this way your question cannot be interpreted as “is your command of the English language insufficient to keep up with me?” which can be answered as “of course not” simply to save face.

9. Involving participants

Involving the audience requires global knowledge. Eliciting comments from quiet participants may be awfully embarrassing to them, and create a barrier with the entire group from which you won’t be able to recover. Sometimes it’s better to address tables and not individuals. In Asia you may consider relaying questions through technology, a proxy, or setting up a question box that can be checked after breaks, for example. My experience in Europe is that I get as many questions during breaks as I do in the class, and I bring those back when we reconvene as anonymous questions.

10. Wrapping up

I mentioned you should not overdo it trying to speak foreign languages or try to “blend in”. But if there is one place where you can make that extra effort that will be recognized and welcomed, it’s at the end. Learn how to say “thank you” in the local language and end your wrap up that way. Make sure you learn the right form! In many languages, saying thank you to one, two, three, or more people, all same gender, or mixed genders requires different words.

Onboarding, elearning and tacit knowledge

Onboarding is probably one of the best examples of how you can “pit a good performer against a bad system, and the system will win almost every time” (Rummler & Brache, 1990).

Picture this from the new hire’s point of view: They’ve just completed what was probably an exhausting interview loop, a day or series of days that can easily feel like some sort of endurance test. They have passed with flying colors, meeting or exceeding what is surely a high bar. Then, they are shown some videos (interestingly called “elearning”), given a short manual with basic resources and left at their new office. By the way, we are below target on a couple of KPIs that will pop up in the next QBR, please see what you can do about those.

And it is likely that our wonderful shift to elearning is making matters worse. In-person onboarding programs at least had the value of making new hires meet a few colleagues who were going through the same transitional stage; elearning is now eliminating much of that face to face interaction and leaving new hires to fend for themselves.

The system wins

No wonder that, as Rummler and Brache suggest, the system will eat them alive. Based on my experience, I wouldn’t go as far as suggesting that onboarding alone can claim the best part of that 25% turnover figure (Employee Turnover Caused By Bad Onboarding Programs). But I do agree with the investment imbalance they describe, where hiring is put at $11k, while onboarding is described as a zero dollar effort in some companies. The assumption is that if the employee met the bar at our notoriously rigorous selection & interview process (“pride” smiley goes here) then they are perfectly capable and will learn the rest on their own. That’s where bad elearning comes in: a few resources will be thrown in, covering some basic skills and internal tools, and that’s about it. The issue, however, is that onboarding is more than just “learning”. In fact, by passing the interview loop, most new hires have already demonstrated that their learning abilities are well above average.

But onboarding does not mean just providing some learning. Alexia Vernon covers a good list of common onboarding mistakes, and while each of the five mistakes described is great advice, I’m picking number 3 for this post, “Failing to address culture fit”.

I don’t know you that well

Is it possible to determine the probability of a good fit at interview time? How do companies claiming to have this ability avoid building communities that fall too often into groupthink patterns? Isn’t the ability to detect “good culture fits” synonymous with poor diversity of some kind? I can’t claim that ability. I never thought I could use it at interview time. It’s simple: I don’t get to know enough of an individual through the selection process, and they don’t get to know the culture they are supposed to be part of until the contract is signed.

This, again, is the system blaming the individual. In advance. We are blaming them for being unable to cope with a situation that has not happened yet. So I’m going to move “good fit” out of the selection process and place it where it belongs: onboarding.

Four tips to embed culture in onboarding

Although every onboarding process may include some knowledge transfer, in person or through elearning, understanding culture must be part of a well-balanced mix, not only in terms of content but also timing and sequence.

1. Making company values real

Sure, the onboarding program probably has a nice professional video talking about company values. But there is nothing like talking about company values and then finishing by saying: “For example, Tom on floor 4. He exemplifies what we mean by open communication in this company. Let me set up a meeting with him, you both should have a chat”. Look around your office. Can you easily find behavior that exemplifies your company values? If so, does your onboarding program connect new hires with the individuals who make those company values living values?

2. Setting a personal networking goal

That means something like “by the end of the onboarding program, you will have X new individuals as part of your personal network”. Add your details here: for example, in order to obtain a balanced network you can suggest that new contacts have to be linked to specific competencies that are applicable to the new hire’s role. Those connections could be established among employees, external individuals, or customers.

Networking is your friend. Networking will teach new hires what videos and classes can’t. It will teach them tacit knowledge. I love watching videos with John Seely Brown talking about tinkering and tacit knowledge, and I find them so relevant to onboarding. Individuals must learn to join. The most important learning happens when things (obtained by lecture, elearning, reading or otherwise) are getting integrated in my head. This is not necessarily a conscious process, and it won’t happen in the classroom or watching a video. It happens by being constantly exposed to tacit knowledge, the fabric of a culture and a way of working, things that are so hard to explain because they are in everyone’s minds and so deeply assumed that nobody knows how to articulate or even surface them. Onboarding can accelerate culture understanding by creating opportunities for tacit knowledge transfer. You can’t convey it, but you can facilitate it.

3. Assigning buddies or mentors

This point simply expands on the need for tacit knowledge. Many onboarding programs assign a “buddy” for practical purposes, but there is a hidden value in having a new hire pair up with a colleague and being able to observe how they behave in various contexts, even in a shadowing capacity. To maximize tacit knowledge, ensure your onboarding program has a number of tasks or assignments that must be completed, covering areas relevant to the role, with their buddy. This, by the way, could be partly provided through virtual communities or corporate social networking.

4. Setting a safe first job assignment

What does failure look like in this company? And success? Can we create a safe first assignment to experience them? One where it’s OK to ask questions and be tentative? One where we can experience our first real feedback loop? After all, the onboarding program is going to take about 90 days, and during that time, you are not always expecting full performance from new hires, so a sandboxed assignment is a good compromise that still aims for productivity. Yes, I know. That requires manager support, and as Sylvestre-Williams points out (Why Your Employees Are Leaving), such support is not always out there. But that would probably take us to metrics, best left for another post.

Rummler, G., Brache, A. (1990) “Improving Performance: How to Manage the White Space on the Organization Chart”, John Wiley & Sons.

LX: Shifting from Content to Experience

We are flooded by content. It is, literally, a world of information. And Google’s mission, apparently, is to “organize the world’s information and make it universally accessible and useful”. I take note that Google assumes “the world’s information” is worth accessing.

It is true that by democratizing broadcasting we pay the price of useless content. But those who have something meaningful to say are broadcasting too, and through informal peer review systems powered by social networks, corporate search engines like Google and other means, the quality of the information that can be obtained through the Internet is constantly improving. Google has it right.

Learning content is no exception. Masterpieces are to be found in blogs, YouTube, wikis, and of course universities that have finally taken the leap and started moving their content online. Coupled with content growth is a decrease in its price; it is much easier to produce a great piece of learning content today than 5 years ago. And many talented individuals are doing that – for a fee, but also for free.

This is good news for learners. If today I can access a course on sensory-neural systems from the Astronautics department of the Massachusetts Institute of Technology, for free, what is next? We are entering an era of top quality learning content oversupply.

For learners, this is a buyer’s market. We can afford to be picky. In fact, I believe that soon learners are going to become very selective about their learning. And with consistently good quality content available, I don’t think their criteria will gravitate around quality, but around experience. I know I can learn from a number of sources, but which one will help me learn and have fun too?

Considerations that now are perhaps not high priority for many learning organizations will surface to the top:

- Can I use my favorite personal device to learn?

- Can I roam from device to device to suit my lifestyle?

- Am I going to meet fellow students, and will the interactions proposed be compatible with my learning style?

The attention is going to shift from content to experience, or more specifically learning experience, LX. It is even possible that LX will influence reputation. After all, reputation is driven by demand, and a great LX will see learners flocking to the best provider.

Do you see the signs of the shift to LX in corporate learning? While in the past there was great emphasis on creating good classroom content, today it’s less raw content production and more content curation. Prolonged sessions in training rooms are giving way to more nimble learning nuggets for just-in-time learning. Less sequential prescriptive guidance and more “paths” to navigate freely. Is the ship slowly changing course towards LX?

The Death of ILT

Will instructor-led training (ILT) be completely eliminated from organization training programs? It’s a question that pops up often, both from learners and training staff.

I find this question annoying. Not because I think it’s without merit – it is an important discussion in a time of change. But I tend to lock down on the subtleties of the terminology and usually come to the conclusion that we have a communication and assumption problem before we even start solving mystery deaths. Learning is about communication, so it’s important to be clear about what it is that we are discussing here.

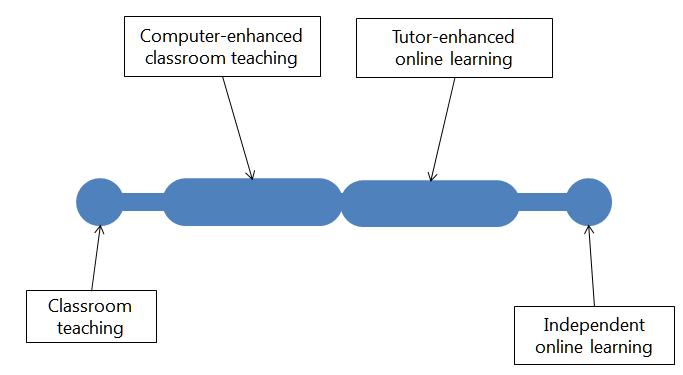

Competences for Online Teaching: A Special Report (Goodyear et al, 2001) provides a summary of a conference on the subject of online learning and competencies. The paper includes a figure that illustrates four broad areas along a horizontal line: classroom teaching, computer-enhanced classroom teaching, tutor-enhanced online teaching, and independent online learning.

Rather than two discrete ends and two contiguous intervals as illustrated in the paper, I think of trainer involvement as decreasing progressively along the graph. At the far right, “independent online learning” still has the trainer “leading”, in the sense that learners may be watching a video or completing an activity independently, but that activity or lecture is still led by the instructor. Still traces of ILT?

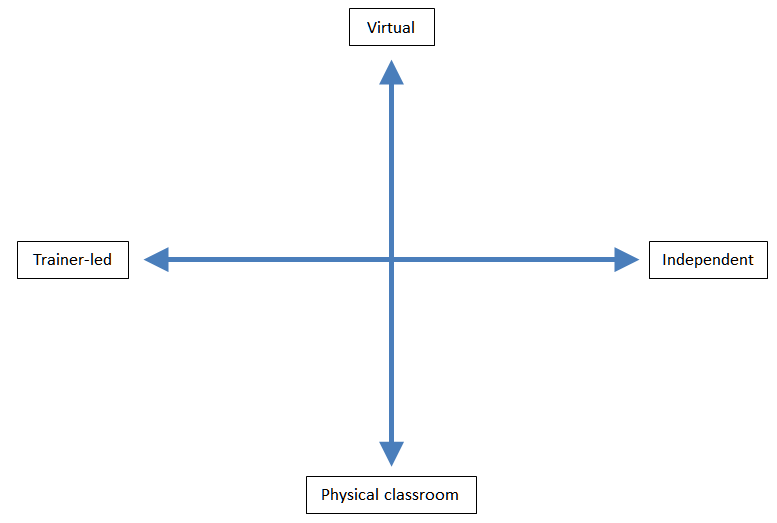

There is something else implied in that line, something that impairs our ability to understand the full range of learning scenarios. The assumption is that as the instructor’s involvement fades, physical presence also fades. But let’s assume for a second that physical presence is not a function of the chart above, but a new orthogonal variable. Let’s introduce that variable in a chart and see what we get.

I would argue that there is a plausible learning scenario in every location within these quadrants. Let me go to the bottom right corner, for example: minimal trainer involvement, classroom experience. Think about a group of children playing in a playground. They are receiving absolutely no direction, but they are actively learning from others. They are doing so in their “classroom”, the playground. “Networking sessions”, as we call them sometimes, fall roughly around this area of the chart too.

Let’s move to the top left of the chart. I have witnessed wonderful examples of trainers taking a large group online through an effective learning experience. Great participation, great interaction, 100% trainer-led, but happening virtually across several countries and cultures. Some of these are called “webinars”, and in my training dictionary, this is also ILT, instructor-led training.

Our terminology is flawed. ILT is perceived as antagonistic to WBT, when in fact most WBT is also ILT. So are we about to witness the death of ILT? I think ILT is alive and well, and will do well in the future too.

Of course we may be asking this question for a very different reason: perhaps our business model or our employment is linked to ILT coupled with physical presence. That combination won’t die either, but it is just a thin line in a big and exciting chart. Why confine the business there?